Over the last few years we've seen an increase in the use of camera effects in games, particularly in cinematics, designed to mimic what we see through real cameras. I want to argue in this post that this is a bad trend that we need to move away from, but that it's also a necessary evil we need to work through in order to get to the other side, where games develop their own visual language independent from film and real-world cameras.

But first, in order to better make my point, let's talk briefly about UIs (User Interfaces).

The Transition to Flat UIs

For quite a few years, UIs on computers gradually evolved fancier looking 3D designs. One of the main reasons for this was that flat, 2D UIs looked simple and cheap to most people's eyes. It was hard to tell the difference between an intentionally simple UI and a cheaply made one.

But it got to the point where people felt the 3D was becoming excessive and wondered how far it could go.

A big change then happened when Microsoft started promoting its Metro UI (there's a longer history there that's not worth going into) and Apple introduced iOS 7. Finally it was acceptable to have flat, minimalist UIs. This has been largely helped by more sophisticated UI toolkits that allow for heavy use of animation and transforms of different kinds to keep the UI interesting without having to resort to gaudy 3D elements.

Camera Effects

I would argue that this is the same process that games have been going through, and I think we might finally be getting to the point that UIs got to when the switch to flat occurred.

Games have always tried to introduce fancy visual effects as a differentiator between budget and AAA titles, and camera effects are a big part of that. Particularly as games have become more mainstream and have had higher production quality trailers made for them, the language of film has also been brought across as a way to make them look slicker and more expensive.

Some of this language is arguably good and improves games:

- Camera cuts

- Angles and positioning

- Simulating focus and depth of field can tell the player what they should be looking at and communicate things like disorientation

- Camera movements like shaky cam can add to immersion

But some of it arguably adds little beyond imitating the flaws of physical lenses:

- Lens flares

- Dirt and grit on the lens

- Chromatic aberration

And there's also the problem of limiting the language of games by using the language of movies. Games are their own medium and need to develop their own camera language. When games mimic film in cutscenes by doing the "handheld camera" effect to appear more gritty, or limit themselves to the pan, zoom and rotation limits of real physical cameras, they miss an opportunity. Games can certainly be interactive movies if they want, but they can also be so much more than that.

The "Flat UI" Of Camera Effects

I think the industry might finally be ready to accept simple visuals as deliberate design choices rather than a sign of cheapness. Now that free game engines like Unreal Engine, CryEngine and Unity make good looking graphics accessible to indie developers and not just AAA developers, graphics are becoming less of a product differentiator, and certainly a less reliable way to tell a cheap game from an expensive game.

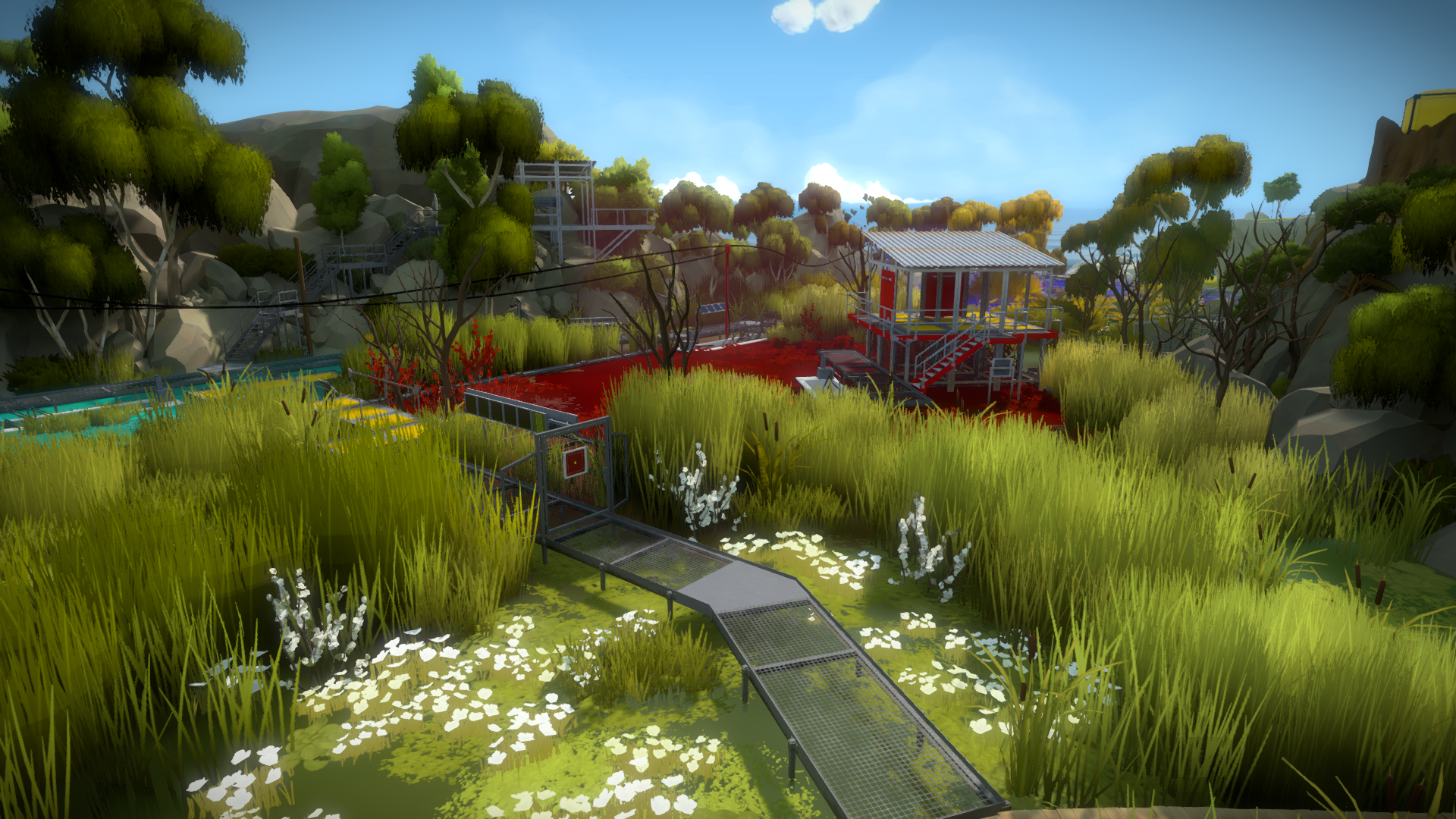

Games like The Witness have shown how you can have simple but gorgeous graphic design without using gaudy camera effects. The arrival of Virtual Reality devices in the mainstream will also force developers to stop relying on camera effects, as these can be quite jarring and sickening in VR. Things like shaky cam are no longer a crutch that can be relied on!

Personally, I've always been a sucker for graphics, and so I've enjoyed the excessive camera effects just as much as I've enjoyed seeing clean, simple visuals. But I do think games need to develop their own voice and identity separate to film and television, and ditching the camera effects will be a step in that direction.
